Artificial intelligence explained in 60 seconds: ideas that changed the world

AI has wide-ranging applications but some experts are warning of potential ‘pitfalls’

In this series, The Week looks at the ideas and innovations that permanently changed the way we see the world.

Artificial intelligence in 60 seconds

Artificial intelligence (AI), sometimes referred to as machine intelligence, is intelligence demonstrated by machines, in contrast to the natural intelligence of humans.

AI is the ability of a computer program or a machine to think and learn, so that it can work on its own without being encoded with commands. The term was coined by American computer scientist John McCarthy in 1955.

Human intelligence is "the combination of many diverse abilities", says Encyclopaedia Britannica. AI, it says, has "focused chiefly on the following components of intelligence: learning, reasoning, problem solving, perception and using language".

AI is currently used for understanding human speech, competing at strategy games such as chess and Go, powering self-driving cars and interpreting complex data.

Some people are wary of the rise of artificial intelligence, with the New Yorker highlighting that "a number of scientists and engineers fear that, once we build an artificial intelligence smarter than we are, a form of AI known as artificial general intelligence (AGI), doomsday may follow".

In "The Age of Spiritual Machines", American inventor and futurist Ray Kurzweil writes that as AI develops and machines gain the capacity to learn more quickly, they "will appear to have their own free will", while Stephen Hawking declared that AGI will be "either the best, or the worst thing, ever to happen to humanity".

How did it develop?

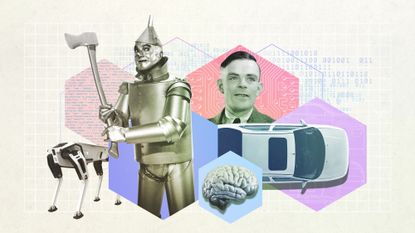

In fiction, the Tin Man from "The Wizard of Oz" represented people’s fascination with robotic intelligence, while the humanoid robot that impersonates Maria in "Metropolis" also displays characteristics of AI.

But in the real world, the British computer scientist Alan Turing published a paper in 1950 in which he argued that a thinking machine was actually possible.

AI research as a distinct field began after a conference at Dartmouth College in the US in 1956.

In 1955, John McCarthy set about organising what would become the Dartmouth Summer Research Project on Artificial Intelligence. The conference took the form of a six- to eight-week brainstorming session, with attendees including scientists and mathematicians with an interest in AI.

According to "AI: The Tumultuous History of the Search for Artificial Intelligence" by Canadian researcher Daniel Crevier, one attendee at the conference wrote "within a generation... the problem of creating 'artificial intelligence' will substantially be solved".

McCarthy is credited with coining the phrase "artificial intelligence" in the 1955 proposal for the conference.

According to the science website Live Science, the US Department of Defense became interested in AI following the conference, but "after several reports criticising progress in AI, government funding and interest in the field dropped off". The period from 1974 to 1980, it says, became known as the "AI winter".

Interest in AI revived in the 1980s, when the British government started funding it again, in part to compete with Japanese efforts. Between 1982 and 1990, the Japanese government invested $400m with the goal of revolutionising computer processing and improving artificial intelligence, according to Harvard University research.

Research in the field expanded, and by the 1990s many of the landmark goals of artificial intelligence had been achieved. In 1997, IBM’s Deep Blue became the first computer to beat a reigning world chess champion in a match when it defeated Russian grandmaster Garry Kasparov.

This was surpassed in 2011, when IBM’s question-answering system Watson won the US quiz show "Jeopardy!" by beating two of the show’s most successful former champions, Brad Rutter and Ken Jennings.

In 2012, a text-based chatbot called Eugene Goostman tricked judges into believing that it was human in a "Turing Test". Turing devised the test in 1950, reasoning that if a human could not tell the difference between another human and a computer, that computer could be considered as intelligent as a human.

ChatGPT, a new chatbot, has provoked much discussion in recent months, intensifying debate over the advantages and disadvantages of AI. It can "write essays, scripts, poems, and solve computer coding in a human-like way", said the BBC, and "it can even have conversations and admit mistakes".

Forbes highlights that AI is currently deployed in products and services such as Apple’s Siri voice assistant on mobile phones, Amazon's Alexa, Tesla’s self-driving cars and Netflix’s film recommendation service.

The Massachusetts Institute of Technology’s Computer Science and Artificial Intelligence Lab has developed an AI model that can work out how much chemotherapy a patient needs to shrink a brain tumour.

How did it change the world?

AI is already changing the world and looks set to define the future too.

According to Harvard researchers, we can expect to see AI-powered driverless cars on the road within the next 20 years, while machine calling is already a day-to-day reality.

Looking beyond driverless cars, the ultimate ambition is general intelligence: a machine that surpasses human cognitive abilities in all tasks. If this is achieved, a future of humanoid robots is not impossible to envision.

However, as Stephen Hawking and others have warned, some fear the rise of an AI-dominated future.

Tech entrepreneur Elon Musk has warned that AI could become "an immortal dictator from which we would never escape". He also signed a letter alongside Hawking and a number of AI experts calling for research into the potential "pitfalls" and societal impacts of widespread AI use.

Joe Evans is the world news editor at TheWeek.co.uk. He joined the team in 2019 and held roles including deputy news editor and acting news editor before moving into his current position in early 2021. He is a regular panellist on The Week Unwrapped podcast, discussing politics and foreign affairs.

Before joining The Week, he worked as a freelance journalist covering the UK and Ireland for German newspapers and magazines. A series of features on Brexit and the Irish border got him nominated for the Hostwriter Prize in 2019. Prior to settling down in London, he lived and worked in Cambodia, where he ran communications for a non-governmental organisation and worked as a journalist covering Southeast Asia. He has a master’s degree in journalism from City, University of London, and before that studied English Literature at the University of Manchester.
