Is facial recognition technology racist?

Concerns raised over Amazon face ID system that confused 28 members of the US Congress with police suspects

Facial recognition technology is an increasingly common part of modern life, used for everything from unlocking iPhones to advanced surveillance. But concerns are being raised that face ID technology can exhibit racial biases, with potentially serious ramifications.

Commercial face recognition software has repeatedly been shown to be “less accurate” on people with darker skin, writes Gizmodo. Civil rights advocates worry about the “disturbingly targeted” ways face-scanning can be used by police.

The most recent example of this involved Amazon and its new technology Rekognition.

The facial recognition tool, which Amazon sells to web developers, wrongly identified 28 members of the US Congress – a disproportionate number of them people of colour – as police suspects from mugshots, reports Reuters.

This is not the first incident of its kind. As face ID technology becomes increasingly widespread, more and more companies have found that their algorithms have a racial bias.

Earlier this year, Google came under fire for failing to entirely fix a racist algorithm that was originally pointed out in 2015 by software engineer Jacky Alciné. He noticed that the image recognition algorithms in Google Photos were classifying his black friends as gorillas. Instead of fixing its facial recognition technology, Google blocked its image recognition algorithms from identifying gorillas altogether.

A similar issue occurred with Apple’s new face ID technique for unlocking its phones.

Last December, it was found that Apple’s Face ID tech couldn’t tell two Chinese women apart. Apple boasts that its Face ID technology is the most advanced in the world and says the probability that a random person could successfully use it to unlock a smartphone is “approximately 1 in 1,000,000”, according to the Inquirer. But the company was forced to issue a refund to a Chinese woman who reported that her co-worker was able to unlock her iPhone X using the face-scanning tech, “despite having reconfigured the facial recognition settings multiple times”.

The woman, known as Yan, was issued a refund and given a new phone – but encountered the same problem again.

This raises the question: why do these issues occur, and can they be solved?

Gizmodo reports that MIT researchers Joy Buolamwini and Timnit Gebru have found that darker-skinned faces are “underrepresented” in the datasets used to train facial recognition systems. This leaves the technology “more inaccurate” when looking at darker-skinned faces.

Solving the issues of racial bias will require not only technical interventions, but also “hard limits” on how and when face-scanning can be used to protect vulnerable communities.

Even then, they say, face recognition will be “impossible without addressing racism in the criminal justice system it will inevitably be used in”.

Create an account with the same email registered to your subscription to unlock access.

Sign up for Today's Best Articles in your inbox

A free daily email with the biggest news stories of the day – and the best features from TheWeek.com

-

Biden poised to ease marijuana restrictions

Biden poised to ease marijuana restrictionsSpeed Read The move will reclassify it as a less dangerous drug

By Rafi Schwartz, The Week US Published

-

A history of student protest at Columbia University

A history of student protest at Columbia UniversityThe Explainer Anti-Israel demonstrations at NYC's Ivy League university echo protests against Vietnam War and South African apartheid

By Harriet Marsden, The Week UK Published

-

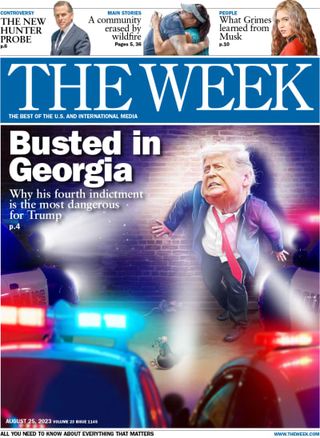

'Trump is ruled in contempt'

'Trump is ruled in contempt'Today's Newspapers A roundup of the headlines from the US front pages

By The Week Staff Published