Stephen Hawking: humanity could be destroyed by AI

Developers and lawmakers must focus on ‘maximising’ the technology’s benefits to society

Stephen Hawking has warned that artificial intelligence (AI) could destroy mankind unless we take action to avoid the risks it poses.

Speaking at this year’s Web Summit in Portugal, the physicist said that along with benefits, the technology also brings “dangers like powerful autonomous weapons, or new ways for the few to oppress the many”.

In quotes reported by Forbes, he continued: “Success in creating effective AI could be the biggest event in the history of our civilisation. Or the worst. We just don’t know.”

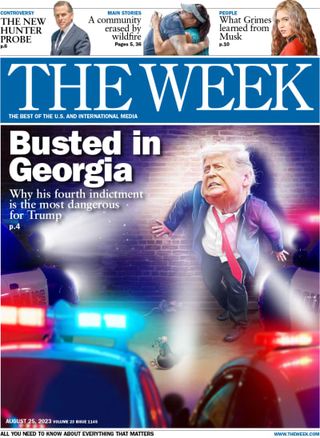

Hawking proposed that humanity could prevent AI from threatening our existence by regulating its development.

“Perhaps we should all stop for a moment and focus not only on making our AI better and more successful, but also on the benefit of humanity,” he added.

His comments come less than three months after Elon Musk, founder of Tesla and SpaceX, said AI was “vastly more risky” than the threat of a nuclear attack from North Korea.

Musk previously told a panel of US state politicians that “until people see robots going down the street killing people, they don’t know how to react, because it seems so ethereal”.

Hawking praised moves in Europe to regulate new technologies, reports CNBC, particularly proposals put forward by lawmakers earlier this year to establish “new rules around AI and robotics”.

Elon Musk says artificial intelligence is more dangerous than war with North Korea

15 August

Tesla and SpaceX founder Elon Musk says that artificial intelligence (AI) is "vastly more risky" than the threat of an attack from North Korea.

In the wake of growing tensions between North Korea and the US, Musk said in a tweet: "If you're not concerned about AI safety, you should be."

This isn't the first time the South Africa-born inventor has expressed his concerns about AI technology.

Speaking to an audience of US state politicians in Rhode Island last month, Musk said: "Until people see robots going down the street killing people, they don't know how to react because it seems so ethereal."

To avoid AI becoming a threat to humanity, he says the US government should "learn as much as possible" and "gain insight" into how the technology works. It should also bring in regulations to ensure companies develop AI safely.

While the inventor concedes that "nobody likes being regulated", he says that everything else that's a "danger to the public is regulated" and that AI "should be too."

Musk's remarks come at the same time as artificial intelligence, developed by his OpenAI company, successfully defeated some of the world's top players on the computer game Dota 2, reports The Guardian.

The system "managed to win all its 1-v-1 games at the International Dota 2 championships against many of the world's best players competing for a $24.8m (£19m) prize fund."

Elon Musk calls for AI regulation before 'it's too late'

18 July

SpaceX and Tesla founder Elon Musk has called for the development of artificial intelligence (AI) to be regulated before "it's too late".

Speaking at a meeting of US state politicians in Rhode Island last weekend, the South African-born inventor said: "AI is a rare case where I think we need to be pro-active in regulation instead of re-active.

"Until people see robots going down the street killing people, they don't know how to react because it seems so ethereal."

He added: "AI is a fundamental risk to the existence of human civilisation."

He also said AI would have a substantial impact on jobs as "robots will be able to do everything better than us", adding that the transport sector, which he said accounted for 12 per cent of jobs in the US, would be "one of the first things to go fully autonomous".

Musk also talked of his "desire to establish interplanetary colonies on Mars" to act as safe havens if robots were to take over Earth, CleanTechnica reports.

To avoid that happening in the first place, he called on the government to "learn as much as possible" and "gain insight" into how AI can be safely developed.

However, critics say Musk's remarks could be "distracting from more pressing concerns", writes Tom Simonite on Wired.

Ryan Calo, a cyber law expert at the University of Washington, told the website: "Artificial intelligence is something policy makers should pay attention to.

"But focusing on the existential threat is doubly distracting from its potential for good and the real-world problems it’s creating today and in the near term."
